Nir Eisikovits, UMass Boston and Daniel J. Feldman, UMass Boston

Christopher Pelkey was shot and killed in a road rage incident in 2021. On May 8, 2025, at the sentencing hearing for his killer, an AI video reconstruction of Pelkey delivered a victim impact statement. The trial judge reported being deeply moved by this performance and issued the maximum sentence for manslaughter.

As part of the ceremonies to mark Israel’s 77th year of independence on April 30, 2025, officials had planned to host a concert featuring four iconic Israeli singers. All four had died years earlier. The plan was to conjure them using AI-generated sound and video. The dead performers were supposed to sing alongside Yardena Arazi, a famous and still very much alive artist. In the end, Arazi pulled out, citing the political atmosphere, and the event didn’t happen.

In April, the BBC created a deepfake version of the famous mystery writer Agatha Christie to teach a “maestro course on writing.” Fake Agatha would instruct aspiring murder mystery authors and “inspire” their “writing journey.”

The use of artificial intelligence to “reanimate” the dead for a variety of purposes is quickly gaining traction. Over the past few years, we’ve been studying the moral implications of AI at the Center for Applied Ethics at the University of Massachusetts, Boston, and we find these AI reanimations to be morally problematic.

Before we address the moral challenges the technology raises, it’s important to distinguish AI reanimations, or deepfakes, from so-called griefbots. Griefbots are chatbots trained on large swaths of data the dead leave behind – social media posts, texts, emails, videos. These chatbots mimic how the departed used to communicate and are meant to make life easier for surviving relations. The deepfakes we are discussing here have other aims; they are meant to promote legal, political and educational causes.

Moral quandaries

The first moral quandary the technology raises has to do with consent: Would the deceased have agreed to do what their likeness is doing? Would the dead Israeli singers have wanted to sing at an Independence ceremony organized by the nation’s current government? Would Pelkey, the road-rage victim, be comfortable with the script his family wrote for his avatar to recite? What would Christie think about her AI double teaching that class?

The answers to these questions can only be deduced circumstantially – from examining the kinds of things the dead did and the views they expressed when alive. And one could ask if the answers even matter. If those in charge of the estates agree to the reanimations, isn’t the question settled? After all, such trustees are the legal representatives of the departed.

But putting aside the question of consent, a more fundamental question remains.

What do these reanimations do to the legacy and reputation of the dead? Doesn’t their reputation depend, to some extent, on the scarcity of appearance, on the fact that the dead can’t show up anymore? Dying can have a salutary effect on the reputation of prominent people; it was good for John F. Kennedy, and it was good for Israeli Prime Minister Yitzhak Rabin.

The fifth-century B.C. Athenian leader Pericles understood this well. In his famous Funeral Oration, delivered at the end of the first year of the Peloponnesian War, he asserts that a noble death can elevate a person’s reputation and wash away their petty misdeeds. That is because the dead are beyond reach and their mystique grows postmortem. “Even extreme virtue will scarcely win you a reputation equal to” that of the dead, he insists.

Do AI reanimations devalue the currency of the dead by forcing them to keep popping up? Do they cheapen and destabilize their reputation by having them comment on events that happened long after their demise?

In addition, these AI representations can be a powerful tool to influence audiences for political or legal purposes. Bringing back a popular dead singer to legitimize a political event and reanimating a dead victim to offer testimony are acts intended to sway an audience’s judgment.

It’s one thing to channel a Churchill or a Roosevelt during a political speech by quoting them or even trying to sound like them. It’s another thing to have “them” speak alongside you. The potential of harnessing nostalgia is supercharged by this technology. Imagine, for example, what the Soviets, who literally worshipped Lenin’s dead body, would have done with a deepfake of their old icon.

Good intentions

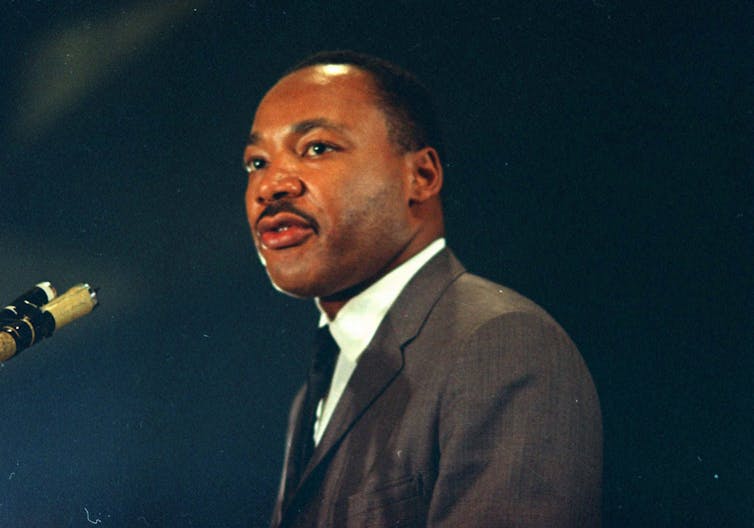

You could argue that because these reanimations are uniquely engaging, they can be used for virtuous purposes. Consider a reanimated Martin Luther King Jr., speaking to our currently polarized and divided nation, urging moderation and unity. Wouldn’t that be grand? Or what about a reanimated Mordechai Anielewicz, the commander of the Warsaw Ghetto uprising, speaking at the trial of a Holocaust denier like David Irving?

But do we know what MLK would have thought about our current political divisions? Do we know what Anielewicz would have thought about restrictions on pernicious speech? Does bravely campaigning for civil rights mean we should call upon the digital ghost of King to comment on the impact of populism? Does fearlessly fighting the Nazis mean we should dredge up the AI shadow of an old hero to comment on free speech in the digital age?

Even if the political projects these AI avatars served were consistent with the deceased’s views, the problem of manipulation – of using the psychological power of deepfakes to appeal to emotions – remains.

But what about enlisting AI Agatha Christie to teach a writing class? Deepfakes may indeed have salutary uses in educational settings. The likeness of Christie could make students more enthusiastic about writing. Fake Aristotle could improve the chances that students engage with his austere Nicomachean Ethics. AI Einstein could help those who want to study physics get their heads around general relativity.

But producing these fakes comes with a great deal of responsibility. After all, given how engaging they can be, it’s possible that the interactions with these representations will be all that students pay attention to, rather than serving as a gateway to exploring the subject further.

Living on in the living

In a poem written in memory of W.B. Yeats, W.H. Auden tells us that, after the poet’s death, Yeats “became his admirers.” His memory was now “scattered among a hundred cities,” and his work subject to endless interpretation: “the words of a dead man are modified in the guts of the living.”

The dead live on in the many ways we reinterpret their words and works. Auden did that to Yeats, and we’re doing it to Auden right here. That’s how people stay in touch with those who are gone. In the end, we believe that using technological prowess to concretely bring them back disrespects them and, perhaps more importantly, is an act of disrespect to ourselves – to our capacity to abstract, think and imagine.

Nir Eisikovits, Professor of Philosophy and Director, Applied Ethics Center, UMass Boston and Daniel J. Feldman, Senior Research Fellow, Applied Ethics Center, UMass Boston

This article is republished from The Conversation under a Creative Commons license.