child education

NASA Selects 21 New Learning Projects to Engage Students in STEM

Last Updated on July 27, 2024 by Daily News Staff

Credits: NASA

NASA is awarding more than $3.8 million to 21 museums, science centers, and other informal education institutions for projects designed to bring the excitement of space science to communities across the nation and broaden student participation in STEM (science, technology, engineering, and mathematics).

Projects were selected for NASA’s Teams Engaging Affiliated Museums and Informal Institutions (TEAM II) program and TEAM II Community Anchor Awards. Both are funded through NASA’s Next Generation STEM (Next Gen STEM) project, which supports kindergarten through 12th-grade students, caregivers, and formal and informal educators in engaging the Artemis Generation in the agency’s missions and discoveries. The selected projects will engage their communities in a wide variety of STEM topics, from aeronautics and Earth science to human space exploration.

TEAM II: NASA-Based Learning Opportunities

NASA’s vision for TEAM II is to enhance the capability of informal education institutions to host NASA-based learning activities while increasing the institutions’ capacity to use innovative tools and platforms to bring NASA resources to students. The agency has selected four institutions to receive approximately $3.2 million in cooperative agreements for projects they will implement during the next three years.

The selected institutions and their proposed projects are:

- Universities Space Research Association, Columbia, Maryland
  Virtual Trips to Extreme Environments

- Michigan Science Center, Detroit, Michigan
  Urban Skies – Equitable Universe: Using Open Space to Empower Youth to Explore Their Solar System and Beyond

- Museum of Science, Boston, Massachusetts
  UNITED (Unveiling NASA’s Inspirational Tales of Exploration and Discovery)

- University Corporation for Atmospheric Research, Boulder, Colorado
  Using a Network of Ozone Bioindicator Gardens to Engage Communities on Air Quality and NASA’s TEMPO Mission

Community Anchors: Local Connections to NASA

The designation as a Community Anchor recognizes institutions as local hubs bringing NASA STEM and space science to students and families in traditionally underserved areas. The agency has selected 17 institutions to receive more than $660,000 in grants to help make these one- to two-year projects a reality, enhancing the local impact and strengthening their ability to build sustainable connections between their communities and NASA.

The selected institutions and their proposed projects are:

- St. Anna’s Episcopal Church, New Orleans, Louisiana
  Communicating Our Future For Education Expansion (COFFEE)

- Frontiers of Flight Museum, Inc., Dallas, Texas
  Youth STEM Initiative – STEM Leaders in Education

- Children’s Museum of Indianapolis, Inc., Indianapolis, Indiana
  Our Earth From Above

- Pacific Science Center Foundation, Seattle, Washington
  Connecting Youth to the Journey of Human Space Flight

- National Space Science & Technology Institute, Colorado Springs, Colorado
  Mobile Earth + Space Observatory Science Experiences for Engaging Rural Students

- Board of Regents of the University of Nebraska, Lincoln, Nebraska
  Because I’m Earth it: A NebrASkA Experience

- Pajarito Environmental Education Center, Los Alamos, New Mexico
  Exploring STEM Opportunities from New Mexico to the Solar System

- Scienceworks Hands-On Museum, Ashland, Oregon
  ScienceWorks Robotics in Space Program

- City of Manhattan, Kansas
  Flying Cleaner and Faster: Connecting Kansas Kids to the Future of Aviation

- Northern Kentucky University, Highland Heights, Kentucky
  Afterschool NASA Production Club

- Utah State University, Logan, Utah
  4-H Moon to Mars Tetrathlon

- New York Hall of Science, Queens, New York
  Connecting Communities to Real Time Astronomy Phenomena: Solar Eclipse 2024

- Monterey Institute for Research In Astronomy, Marina, California
  MIRA la Luna: Igniting Interest in STEM for Middle School Students of the Salinas Valley

- Infinity Science Center, Inc., Pearlington, Mississippi
  Outreach STEM Education: Bringing NASA STEM Education to local communities through local county library systems and INFINITY Science Center

- Sierra Nevada Journeys, Reno, Nevada
  NASA Family STEM Nights

- Union Station Kansas City, Inc., Kansas City, Missouri
  Union Station Kansas City Inc NASA Team II Proposal

- Eugene Science Center Inc., Eugene, Oregon
  Sky’s The Limit: Access to Portable Planetarium Experiences for Rural and Title I Schools to Address Disparity in STEM Proficiency

Next Gen STEM is a project within NASA’s Office of STEM Engagement, which develops unique resources and experiences to spark student interest in STEM and build a skilled and diverse next generation workforce. For the latest NASA STEM events, activities, and news, visit:

Source: NASA

Our Lifestyle section on STM Daily News is a hub of inspiration and practical information, offering a range of articles that touch on various aspects of daily life. From tips on family finances to guides for maintaining health and wellness, we strive to empower our readers with knowledge and resources to enhance their lifestyles. Whether you’re seeking outdoor activity ideas, fashion trends, or travel recommendations, our lifestyle section has got you covered. Visit us today at https://stmdailynews.com/category/lifestyle/ and embark on a journey of discovery and self-improvement.

News

Children can be systematic problem-solvers at younger ages than psychologists had thought – new research

Child psychologists: Celeste Kidd’s research challenges long-standing ideas from Jean Piaget about children’s problem-solving abilities. Her findings show that children as young as four can independently utilize algorithmic strategies to solve complex tasks, contradicting the belief that systematic logical thinking develops only after age seven. This insight highlights the importance of nurturing algorithmic thinking in early education.

Last Updated on March 16, 2026 by Daily News Staff

Celeste Kidd, University of California, Berkeley

I’m in a coffee shop when a young child dumps out his mother’s bag in search of fruit snacks. The contents spill onto the table, bench and floor. It’s a chaotic – but functional – solution to the problem.

Children have a penchant for unconventional thinking that, at first glance, can look disordered. This kind of apparently chaotic behavior served as the inspiration for developmental psychologist Jean Piaget’s best-known theory: that children construct their knowledge through experience and must pass through four sequential stages, the first two of which lack the ability to use structured logic.

Piaget remains the GOAT of developmental psychology. He fundamentally and forever changed the world’s view of children by showing that kids do not enter the world with the same conceptual building blocks as adults, but must construct them through experience. No one before or since has amassed such a catalog of quirky child behaviors that researchers even today can replicate within individual children.

While Piaget was certainly correct in observing that children engage in a host of unusual behaviors, my lab recently uncovered evidence that upends some long-standing assumptions about the limits of children’s logical capabilities that originated with his work. Our new paper in the journal Nature Human Behaviour describes how young children are capable of finding systematic solutions to complex problems without any instruction.

https://www.youtube.com/embed/Qb4TPj1pxzQ?wmode=transparent&start=0
Jean Piaget describes how children of different ages tackle a sorting task, with varying success.

Putting things in order

Throughout the 1960s, Piaget observed that young children rely on clunky trial-and-error methods rather than systematic strategies when attempting to order objects according to some continuous quantitative dimension, like length. For instance, a 4-year-old child asked to organize sticks from shortest to longest will move them around randomly and usually not achieve the desired final order.

Psychologists have interpreted young children’s inefficient behavior in this kind of ordering task – what we call a seriation task – as an indicator that kids can’t use systematic strategies in problem-solving until at least age 7.

Somewhat counterintuitively, my colleagues and I found that increasing the difficulty and cognitive demands of the seriation task actually prompted young children to discover and use algorithmic solutions to solve it.

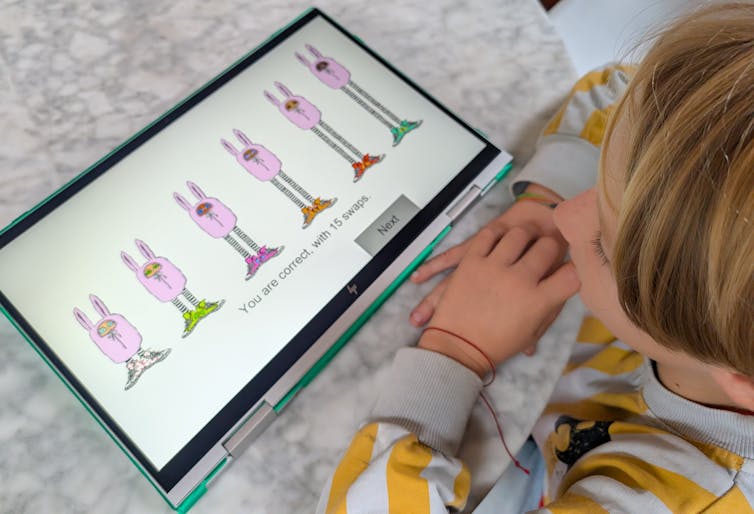

Piaget’s classic study asked children to put some visible items like wooden sticks in order by height. Huiwen Alex Yang, a psychology Ph.D. candidate who works on computational models of learning in my lab, cranked up the difficulty for our version of the task. With advice from our collaborator Bill Thompson, Yang designed a computer game that required children to use feedback clues to infer the height order of items hidden behind a wall.

The game asked children to order bunnylike creatures from shortest to tallest by clicking on their sneakers to swap their places. The creatures only changed places if they were in the wrong order; otherwise they stayed put. Because they could only see the bunnies’ shoes and not their heights, children had to rely on logical inference rather than direct observation to solve the task. Yang tested 123 children between the ages of 4 and 10.

https://www.youtube.com/embed/GlsbcE6nOxk?wmode=transparent&start=0
Researcher Huiwen Alex Yang tests 8-year-old Miro on the bunny sorting task. The bunnies are hidden behind a wall with only their sneakers visible. Miro’s selections exemplify use of selection sort, a classic efficient sorting algorithm from computer science. Kidd Lab at UC Berkeley.

Figuring out a strategy

We found that children independently discovered and applied at least two well-known sorting algorithms. These strategies – called selection sort and shaker sort – are typically studied in computer science.
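For readers curious what these two strategies look like when the only available move is "click two creatures and see whether they swap," here is a minimal Python sketch. It is an illustration under stated assumptions, not the study's software or analysis code: the `HiddenLineup` class, its `click` method, and the choice to allow clicks on any two positions are hypothetical stand-ins for the game described above. The selection sort shown is a swap-based variant adapted to that swap-only feedback.

```python
class HiddenLineup:
    """Hypothetical model of the game: heights are hidden, clicks are the only move."""

    def __init__(self, heights):
        self._heights = list(heights)  # hidden from the player
        self.clicks = 0                # number of clicks made so far

    def __len__(self):
        return len(self._heights)

    def click(self, i, j):
        """Click positions i < j. They swap only if the left one is taller.

        Returns True if a swap happened, False if the pair stayed put.
        """
        self.clicks += 1
        if self._heights[i] > self._heights[j]:
            self._heights[i], self._heights[j] = self._heights[j], self._heights[i]
            return True
        return False

    def reveal(self):
        """Show the line-up (for checking the result, not for solving)."""
        return list(self._heights)


def selection_sort(game):
    """Swap-based selection sort: fix one position at a time, left to right.

    Position i is clicked against every later position, so after the inner
    loop it is guaranteed to hold the shortest remaining creature.
    """
    n = len(game)
    for i in range(n - 1):
        for j in range(i + 1, n):
            game.click(i, j)


def shaker_sort(game):
    """Shaker (cocktail) sort: sweep neighbors left to right, then right to left."""
    lo, hi = 0, len(game) - 1
    while lo < hi:
        swapped = False
        for k in range(lo, hi):          # forward sweep pushes the tallest to hi
            swapped |= game.click(k, k + 1)
        hi -= 1
        for k in range(hi, lo, -1):      # backward sweep pushes the shortest to lo
            swapped |= game.click(k - 1, k)
        lo += 1
        if not swapped:                  # nothing moved in either sweep: done
            break


if __name__ == "__main__":
    for strategy in (selection_sort, shaker_sort):
        game = HiddenLineup([3, 1, 4, 2, 5])
        strategy(game)
        print(strategy.__name__, game.reveal(), "clicks:", game.clicks)
```

Neither function ever reads the hidden heights. Both rely on the structure of the click sequence alone, which is the kind of inference the task demands.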

More than half the children we tested demonstrated evidence of structured algorithmic thinking, and at ages as young as 4 years old. While older kids were more likely to use algorithmic strategies, our finding contrasts with Piaget’s belief that children were incapable of this kind of systematic strategizing before 7 years of age. He thought kids needed to reach what he called the concrete operational stage of development first.

Our results suggest that children are actually capable of spontaneous logical strategy discovery much earlier when circumstances require it. In our task, a trial-and-error strategy could not work because the objects to be ordered were not directly observable; children could not rely on perceptual feedback.

Explaining our results requires a more nuanced interpretation of Piaget’s original data. While children may still favor apparently less logical solutions to problems during the first two Piagetian stages, it’s not because they are incapable of doing otherwise if the situation requires it.

A systematic approach to life

Algorithmic thinking is crucial not only in high-level math classes, but also in everyday life. Imagine that you need to bake two dozen cookies, but your go-to recipe yields only one. You could go through all the steps of making the recipe twice, washing the bowl in between, but you’d never do that because you know that would be inefficient. Instead, you’d double the ingredients and perform each step only once. Algorithmic thinking allows you to identify a systematic way of approaching the need for twice as many cookies that improves the efficiency of your baking.

Algorithmic thinking is an important capacity that’s useful to children as they learn to move and operate in the world – and we now know they have access to these abilities far earlier than psychologists had believed.

That children can engage with algorithmic thinking before formal instruction has important implications for STEM – science, technology, engineering and math – education. Caregivers and educators now need to reconsider when and how they give children the opportunity to tackle more abstract problems and concepts. Knowing that children’s minds are ready for structured problems as early as preschool means we can nurture these abilities earlier in support of stronger math and computational skills.

And have some patience next time you encounter children interacting with the world in ways that are perhaps not super convenient. As you pick up your belongings from a café floor, remember that it’s all part of how children construct their knowledge. Those seemingly chaotic kids are on their way to more obviously logical behavior soon.

Celeste Kidd, Professor of Psychology, University of California, Berkeley

This article is republished from The Conversation under a Creative Commons license. Read the original article.

Dive into “The Knowledge,” where curiosity meets clarity. This playlist, in collaboration with STMDailyNews.com, is designed for viewers who value historical accuracy and insightful learning. Our short videos, ranging from 30 seconds to a minute and a half, make complex subjects easy to grasp in no time. Covering everything from historical events to contemporary processes and entertainment, “The Knowledge” bridges the past with the present. In a world where information is abundant yet often misused, our series aims to guide you through the noise, preserving vital knowledge and truths that shape our lives today. Perfect for curious minds eager to discover the ‘why’ and ‘how’ of everything around us. Subscribe and join in as we explore the facts that matter. https://stmdailynews.com/the-knowledge/

Entertainment

Smart Gaming: How Parents Can Keep Kids Safe Online

Parents can enhance kids’ safety during online gaming by using privacy settings, researching games, enabling age checks, keeping personal information private, and utilizing parental controls and security tools.

Last Updated on March 14, 2026 by Daily News Staff

(Family Features) Playing video games can be a fun, social experience. However, online gaming also poses real risks, especially for kids. As a parent, you don’t necessarily need to be a gamer yourself to help keep your children safe when the controller is in their hands.

Consider taking proactive steps like these to create a healthy online gaming environment for kids of all ages.

Check System Privacy Settings

As a first line of defense – before your child even starts gaming – spend some time in the device or console privacy settings. Here you can turn off sharing, disable location tracking, limit microphone and camera access and restrict how other users can interact with your child’s profile. Similarly, many games and platforms include built-in privacy settings that can be tailored to your child’s age and online experience. These settings may allow you to limit who can view your child’s profile or send a friend request, message or voice chat.

Research Games

Because not all games are created equal, look up game ratings through a service such as ESRB before buying or downloading to understand the maturity level of the game and determine if it’s appropriate for your child. To take it a step further, read reviews from other parents or watch gameplay videos to see if you deem not only the content but also the social interaction acceptable.

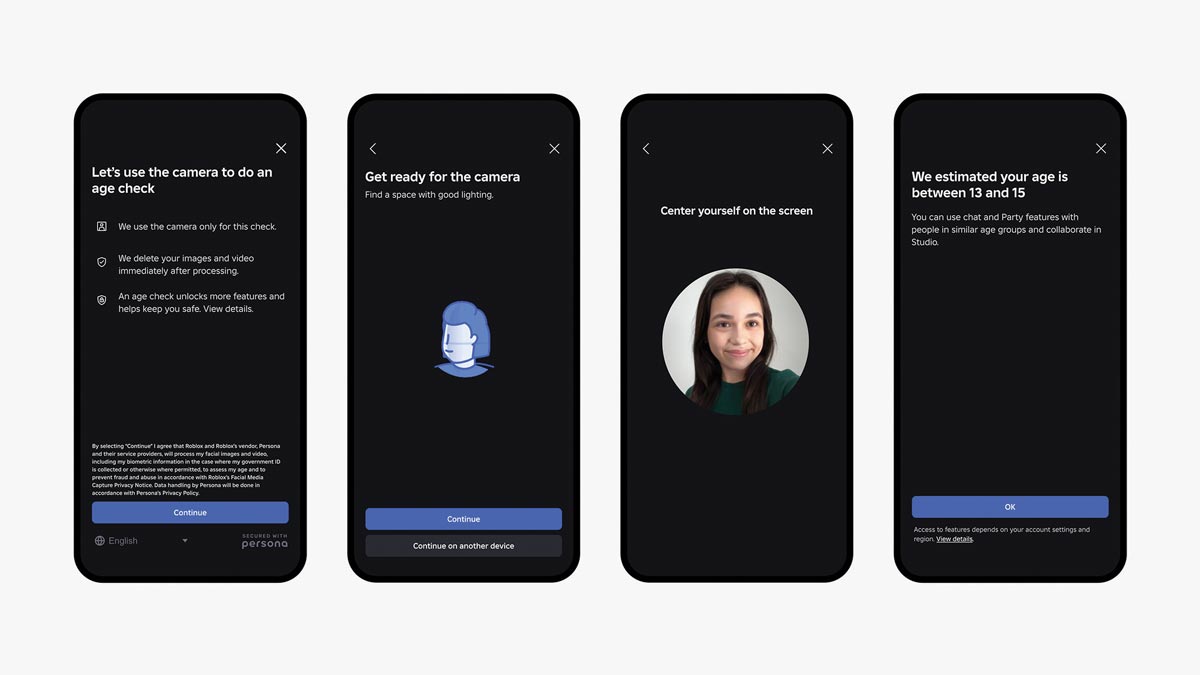

Use Facial Age Estimation

Online platforms are increasingly looking for ways to keep users safe, and that includes added levels of verification. As part of a multilayered approach to safety, Roblox is the first online gaming platform to require age checks for users of all ages to access chat features, enabling age-appropriate communication and limiting conversations between adults and minors. These age checks use Facial Age Estimation technology directly within the app and are designed to be fast, easy and secure.

“Our commitment to safety is rooted in delivering the highest level of protection for our users,” said Matt Kaufman, chief safety officer at Roblox. “By building proactive, age-based barriers, we can empower users to create and connect in ways that are both safe and appropriate.”

Once age-checked, users are assigned to one of six age groups: under 9, 9-12, 13-15, 16-17, 18-20 or 21 and older, ensuring conversations are safe and age appropriate. Age checks are optional; however, features like chat will not be accessible unless an age check is completed. Chat is also turned off by default for children under age 9, unless a parent provides consent after an age check.

Keep Personal Information Private

It’s seldom a bad idea to be extra cautious when interacting with strangers online, even if they seem friendly enough while playing the game. Teach children what information not to share, including their full name, address, birthday, school name, phone number, email address, passwords or any photos that may contain any personal information (like a house number or school logo) in the background. Also encourage a screen name and generic avatar for added privacy.

Turn on Parental Controls

Designed to allow parents a supervisory role in their child’s online gaming experience, parental controls on many platforms include the ability to set schedules and limit playtime, restrict access to certain content or social features, require a password for purchases or set a spending limit.

Avoid Clicking Unfamiliar Links

Player profiles and in-game chats may include links to external sites, including those promising rewards or cheat codes. Because they can be used to gain access to personal information, remind your children to ask an adult before clicking any unfamiliar links while gaming so they can be verified as trustworthy.

Employ Privacy and Security Tools

While system or console-specific settings allow parents to set content restrictions, approve downloads, manage friends lists and more, additional layers of security are sometimes necessary. Extra safeguards such as antivirus and internet security software, DNS (domain name system) filtering and two-factor authentication can also be enabled to help keep kids safe online.

For more tools to help parents make informed decisions and support their children’s gaming experience, visit corp.roblox.com/safety.

Photo courtesy of Shutterstock (father and daughter playing video game)

SOURCE:

Roblox

Lifestyle

Preparing Students for What’s Next in Work

Preparing Students: Automation, AI and societal economic changes are affecting the workforce and making a significant impact on the employment prospects of future generations. Consider this guidance to put students on the path toward greater earning potential and economic mobility in a rapidly changing economy.

(Family Features) Automation, AI and societal economic changes are affecting the workforce and making a significant impact on the employment prospects of future generations.

More than one-third of today’s college graduates are “underemployed,” meaning they work jobs that don’t require a college degree and may pay less than a living wage, according to data from the Federal Reserve Bank of New York.

At the same time, a World Economic Forum report explored how advances in AI are threatening to negatively impact access to entry-level and even mid-level jobs for millions of Americans.

Looking ahead, research by Georgetown University indicates that by 2031, 70% of jobs will require education or training beyond high school. However, data from the National Center for Education Statistics indicate that only one-third of high school graduates go on to complete a college degree, and many of those degrees are in fields that do not lead to high-earning, high-growth professions.

These challenges are not lost on today’s students. In a survey by Junior Achievement and Citizens, 57% of teens reported AI has negatively impacted their career outlook, raising concerns about job replacement and the need for new skills. What’s more, a strong majority (87%) expect to earn extra income through side hustles, gig work or social media content creation.

“To put students on the path toward greater earning potential and economic mobility in a rapidly changing economy, students need proactive education and exposure to transferable skills and competencies, such as creative and critical thinking, financial literacy, problem-solving, collaboration and career planning,” said Jack Harris, CEO, Junior Achievement.

This assertion is consistent with findings from the Camber Collective. This social impact consulting group identified four key life experiences that students can consider and explore, each of which positively affects lifetime earnings:

- Completing secondary education

- Graduating with a degree in a high-paying field of study

- Receiving mentorship during adolescence

- Obtaining a first full-time job with opportunity for advancement

Students aiming to equip themselves with the skills and experience necessary for the future workforce can seek:

- Learning opportunities that are designed with the future in mind. For example, learning experiences offered through Junior Achievement reflect the skills and competencies needed to promote economic mobility.

- Internships or apprenticeships that provide hands-on experience and exposure to a career field that can’t be found in a textbook.

- Volunteer or extracurricular roles that develop communication and leadership skills. Virtually every career field requires these soft skills for growth and greater earning potential.

- Relationships that provide insight and connection. Networking with individuals who are already excelling in a chosen field, as well as peers who share similar aspirations, offers perspective from those who are where you wish to be and potentially opens future doors for employment.

- Courses that offer introductory insight into a chosen career path. Local trade or technical schools and other training organizations may even offer certifications that align with a student’s area of interest.

To learn more about how students can pursue education for what’s next, visit JA.org.

SOURCE: